Have you ever had that sense of dread on discovering your favourite novel is going to be a movie or a TV series? Fans of CJ Sansom’s books have been divided on the adaptation of their favourite historical novels about a hunchbacked lawyer during the Dissolution of the Monasteries. Some have been delighted by what they’ve seen, and felt the four episodes of Shardlake on Disney+ were true to the original books. Others were appalled.

The original books are greatly loved. On The Rest is Entertainment podcast, Richard Osman read out comments from his own mother about how and why she loved CJ Sansom’s books so much. I was not so captivated. I read the first book, Dissolution, some years ago and liked it. But I didn’t like it enough to read more.

So when the TV adaptation landed on Disney+ I was curious. My own reaction was relief that CJ Sansom had passed away only days before his first novel arrived in our living rooms. Sansom was committed to historical accuracy and authenticity. The TV series? Not so much.

But Shardlake is entertainment for the masses, not the bookish. Why shouldn’t sixteenth century monks have incredible teeth? Why shouldn’t they burn candles by the dozen in every room of the monastery, day and night, despite the fact that candles were eye-wateringly expensive back then? And yes, these monks should be going to church at least nine times a day, and spend hours in prayer and private study. But who really wants to watch that? This isn’t Wolf Hall on BBC2. This is mainstream global streaming TV: the Disneyfication of the Monasteries.

As a screenwriter myself, I know all too well that the dynamics of twenty-first century television – aka ‘content’ – and novels are very different. (My failed novels have reinforced this lesson for me.) Shardlake has to appeal to an international audience who have not read, and will never read, CJ Sansom’s books. They won’t even listen to Tom Holland and Dominic Sandbrook talk about the Dissolution of the Monasteries on The Rest is History podcast.

Novels are fairly cheap to print. TV is expensive, burning money faster than the monks of St Donatus can burn candles. Shardlake is international TV, financed internationally and filmed internationally. Consequently, you are not looking at the Kent countryside. You are looking at Hungary, Austria and Romania for a mixture of reasons. Mostly these would be tax breaks, cheaper crews and financial incentives.

St Donatus’s monastery is a mash-up of the medieval Kreuzenstein Castle near Vienna and the gothic Hunedoara Castle in Transylvania. It looks brilliant. It just does not in any way resemble medieval monasteries, which were surprisingly uniform throughout Europe. The chapel at the monastery is comically small, whereas there would, in real life, be a hilariously large abbey.

The New Statesman said, “This is not Merrye Englande. It is the Grand Anywhere we’ve come to know all too well in the age of streaming, and it bores me to death, my eyes unable to stick to it,” which seems a little overdramatic. Most of the reviewers were unconcerned by this lack of historical accuracy. The Guardian called it ‘magnificent’; others were ambivalent. It scored about 80% on Rotten Tomatoes with both the critics and the audience. Overall, Shardlake has been a hit.

Given the differences in the media, why are both versions of Shardlake so successful? The secret sauce is the hunchback himself, Shardlake. He is the sleuth, trying to solve the murder of a fellow commissioner in the service of the King’s ruthless right-hand man, Thomas Cromwell. The recipe for the Shardlake sauce is made up of two key ingredients.

The first is his goodness. It seems like a bland attribute, but it’s rather refreshing, especially in a world divided both then and now. Shardlake is not a complex character with inner demons. (At least that’s not how he’s presented in the first book or this adaptation.) My abiding memory of the book is that Shardlake was one of the good guys. This was surprising at the time, as normally Protestants were seen as Philistines and cultural vandals who cynically changed their theology to strip the church of its wealth, before passing the churches on to their descendants who smashed the statues, whitewashed the walls and, eventually, cancelled Christmas. Shardlake may be in the service of Thomas Cromwell, but he knows in his heart of hearts that Anne Boleyn was innocent of the crimes for which she was beheaded. And in some small way, he makes amends for this.

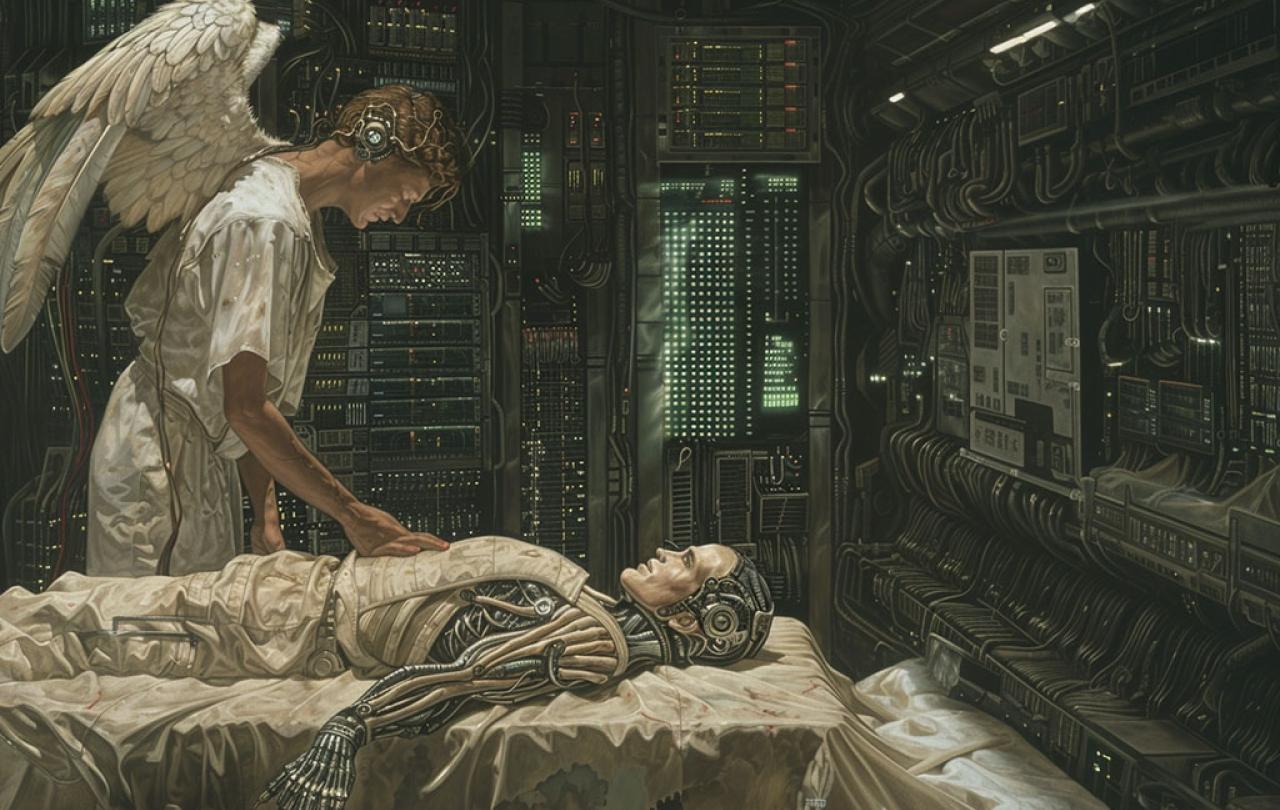

But Shardlake’s goodness is only half of the recipe. The other half is his hunched back. In the sixteenth century, this makes him an object of ridicule and shame. It is not flipped around to become a strength. It is an affliction with which he has to cope. Given that Shardlake’s world is steeped in religion, we are reminded of the ministry of Jesus, who attracted the sick, the crippled, the lepers and the blind. They were, of course, healed; Shardlake is not so fortunate.

Shardlake bears his cross with fortitude, not bitterness. Likewise, Jesus Christ himself bore his cross as a victim of injustice on trumped-up charges, beaten and executed as one cursed alongside criminals, saying ‘Father forgive’. Shardlake, like Christ in the gospels, is a suffering servant. And now Disney may see the Gospel According to Shardlake spreading all over the world.