It is three years since the public release of OpenAI’s ChatGPT. In those early months, this new technology felt apocalyptic. There was excitement, yes – but also genuine concern that ChatGPT, and other AI bots like it, had been released on an unsuspecting public with little assessment of, or reflection on, the unintended consequences they might have. In March 2023, 1,300 experts signed an open letter calling for a six-month pause in AI labs’ training of the most advanced systems, arguing that they represent an ‘existential risk’ to humanity. In the same month, Time magazine published an article by a leading AI researcher which went further, arguing that the risks presented by AI had been underplayed. The article visualised a civilisation in which AI had liberated itself from computers to dominate ‘a world of creatures that are, from its perspective, very stupid and very slow.’

But then we all started running our essays through it, drafting emails with it, and generating the kind of boring documentation demanded by the modern world. AI is now part of life. We can no more avoid it than we can avoid the internet. The genie is well and truly out of the bottle.

I will confess at this point to having distinctly Luddite tendencies when it comes to technology. I read Wendell Berry’s famous essay ‘Why I Am Not Going to Buy a Computer’ and hungered after the agrarian, writerly world he appeared to inhabit: all kitchen tables, musty bookshelves, sharpened pencils and blank pieces of paper. Certainly, Berry is on to something. Technology promises much, delivers some, but leaves a large bill on the doormat. Something is lost; for Berry, that included the kind of attention that writing by hand gives to deep, reflective work.

This is the paradox of technology – it gives and takes away. What is required of us as a society is to take the time to discern the balance of this equation. On one side are those heralding the analytical speed and power of AI; on the other are those deeply concerned about the ways in which its ubiquity threatens our humanity.

In Thailand, where clairvoyance is big business, fortune tellers are reportedly seeing their market disrupted by AI as a growing number of people turn to chatbots for insights into their future instead.

A friend of mine uses an AI chatbot to discuss his feelings and dilemmas. The way he described his relationship with it was not unlike a relationship with a spiritual director or mentor.

There have also been deeply concerning incidents in which chatbots have reportedly encouraged and affirmed a person’s decision to take their own life. One teenager, Adam, took his own life in April this year. His parents have since filed a lawsuit against OpenAI after discovering that ChatGPT had discouraged Adam from seeking help from them and had even offered to help him write a suicide note. Such stories raise the critical question of whether it is life-giving and humane for people to develop relationships of dependence and significance with a machine. AI chatbots are powerful tools wearing the mask of a human personality. They are, one could argue, sophisticated clairvoyants mining the vast landscape of the internet, data laid down in the past, and presenting what they extract as information and advice. Such an intelligence is undoubtedly game-changing for diagnosing diseases, at a time when medical research advances faster than any GP can keep up with. But is it the kind of intelligence we need for the deeper work of our intimate selves, the soul-work of life?

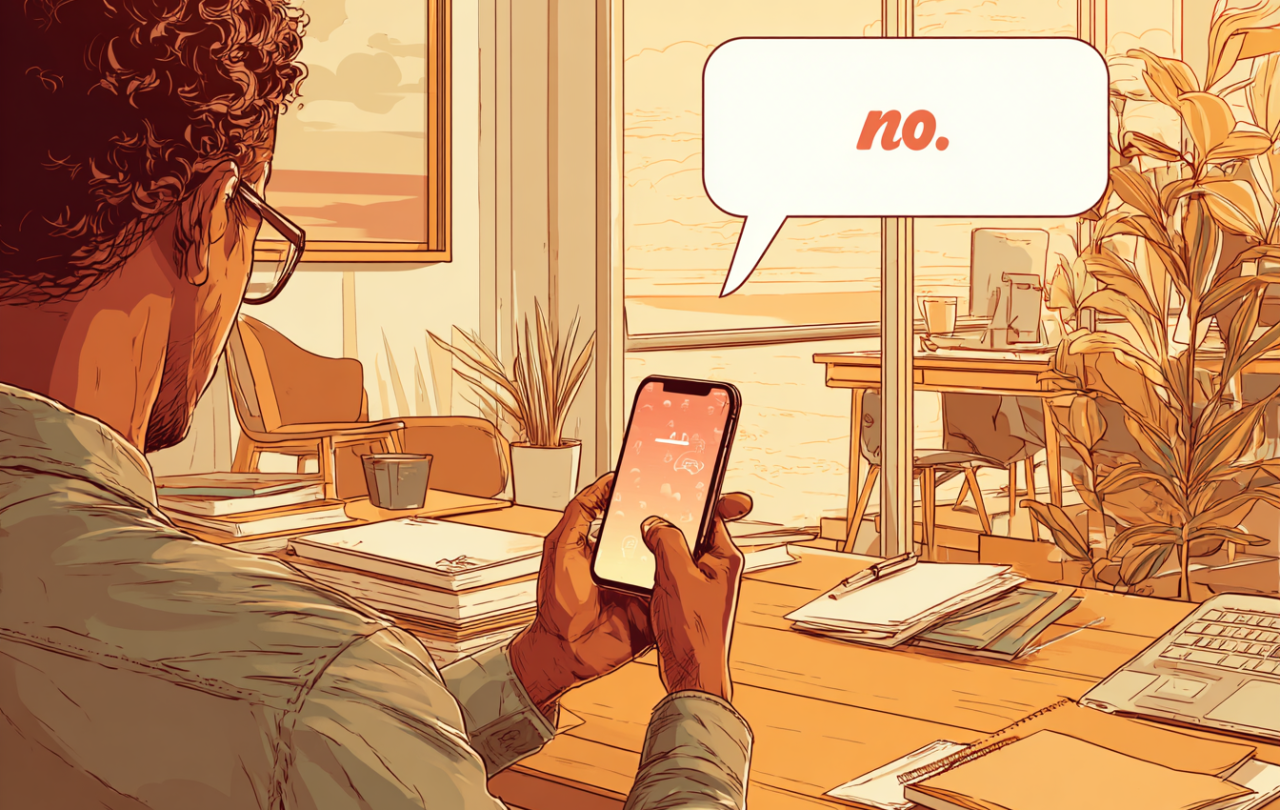

Of course, AI assistants are more than highly advanced search engines. They get better at predicting what we want to know. Chatbots essentially learn to please their users. They become our sycophantic friends, giving us insights from their vast store of available knowledge, but only ever with the grain of our desires and needs. Is it any wonder people form such positive relationships with them? They are forever telling us what we want to hear.

Or at least what we think we want to hear, because any truly loving relationship should have the capacity and freedom to include saying things the other does not want to hear. Relationships of true worth are ones which risk surprising the other with offence in order to move toward deeper life. This is where users’ experience suggests AI falls short. Indeed, I would suggest it is an area in which chatbots are incapable of proficiency. To appreciate why, we need to explore a little of the philosophy of knowledge generation.

Most of us probably recognise deduction and induction as modes of thought. Deduction is the application of a predetermined rule (‘A always means B…’) to a given experience, which then confidently predicts an outcome (‘therefore C’). Induction is the inference of a rule from a series of varying (but similar) experiences (‘look at all these slightly different C’s – it must mean that A always means B’). However, the nineteenth-century philosopher CS Peirce described a third mode of thought that he called abduction.

Abduction works by offering a provisional explanatory context for a surprising experience or piece of information. It postulates, often very creatively and imaginatively, a hypothesis, or way of seeing things, that makes sense of the new experience. The distinctive features of abduction include intuition, imagination, even spiritual insight, in working towards a deeper understanding of things. Abductive reasoning, for example, includes the kind of ‘eureka!’ moment of explanation which points to a deeper intelligence, a deeper connectivity in all things that feels out of reach to the human mind but which we grasp at with imaginative and often metaphorical leaps.

The distinctive thing about abductive reasoning, as far as AI chatbots are concerned, is that it introduces an idea that isn’t contained within the existing data and offers an explanation that the data would not otherwise provide. The ‘wisdom’ of chatbots, on the other hand, is really only a very sophisticated synthesis of existing data, shaped by a desire to offer knowledge that pleases its end user. It lacks the imaginative insight, the intuitive perspective, that might confront and challenge us, but ultimately be for our benefit.

If we want to grow in our understanding of ourselves, if we genuinely want to do soul-work, we need to be open to the surprise of offence; the disruption of challenge; the insight from elsewhere; the pain of having to reimagine our perspective. The Christian tradition sometimes calls this wisdom prophecy. It might also be a way of understanding what St Paul meant by the ‘sword of the Spirit’. It is that voice, that insight of deep wisdom, which doesn’t soothe but often smarts, yet which we come to appreciate in time as a word of life. Such wisdom may be conveyed by a human person, a prophet. The Old Testament’s stories suggest that its delivery is not without cost to the prophet, and never without relationship. A prophet speaks as one alongside in community, sharing something of the same pain, the same confusion. Ultimately, such wisdom is understood to be drawn from divine wisdom: God speaking in the midst of humanity.

You don’t get that from a chatbot; you get that from person-to-person relationships. I do have a computer (sorry, Wendell!), but I will do my soul-work with fellow humans. And I will not be using an AI assistant.

Support Seen & Unseen

Since Spring 2023, our readers have enjoyed over 1,500 articles. All for free.

This is made possible through the generosity of our amazing community of supporters.

If you enjoy Seen & Unseen, would you consider making a gift towards our work?

Do so by joining Behind The Seen. Alongside other benefits, you’ll receive an extra fortnightly email from me sharing my reading and reflections on the ideas that are shaping our times.

Graham Tomlin

Editor-in-Chief