“Nobody thinks they’re the bad guy”. That’s a phrase I often use when helping people write situation comedies. It’s always useful to have a strong antagonist who gets in the way of our hero. But the villains tend not to consider themselves to be evil. In fact, they are offended at the suggestion.

The Batman universe has taken the interesting villain to new levels. The Penguin is Gotham’s latest production, a brand-new TV series on HBO. Colin Farrell plays a highly nuanced anti-hero, exploring The Penguin’s “awkwardness, and his strength, and his villainy, yes, his propensity for violence”. Farrell told Comicbook.com he was attracted to the role because “there's also a heartbroken man inside there you know, which just makes it really tasty.” Audiences are often invited to have sympathy for the devil. Should we be worried about the blurring of the lines between good and evil?

I’ve been asking myself this question as I’ve been writing a new animation which involves a villain called Dr Popp who is trying to take over a city. But what kind of villain do we want in 2024?

Jack Nicholson’s portrayal of The Joker back in 1989 feels like pop-culture ancient history. His Joker was an embittered agent of chaos without many redeeming qualities but mercifully lacked the nihilism of later versions. It was an old-fashioned story of cops and robbers which has its own simplistic charm. But have those days gone forever, having been shot in the head and dropped off a bridge into a river?

The problem is that it is so easy to humanise evil. You just give it a human face. The arch-villains of the twentieth century – the Nazi members of the SS – are rather sweet when portrayed by comedians Mitchell and Webb. A nervous member of an SS unit (Mitchell) waiting for an attack from the Russians looks at the skull on his cap and asks his comrade-in-arms (Webb): “Hans, are we the baddies?”

Any student of World War Two will know that it’s never as simple as good versus evil. Many terrible things were done by people who felt justified in their behaviour. Moreover, ‘the goodies’ also felt compelled to do morally dubious things – like the bombing of civilians in cities – in order to defeat ‘the baddies’. After all, they started it. The truth is always far more complicated than the war films suggest.

Ten years ago, I was researching real-life baddies for Bluestone 42, my sitcom about a bomb disposal team in Afghanistan. At times, I had to think like the Taliban who, in their own minds, were entirely justified in leaving bombs by the side of the road, to be triggered by British soldiers or Afghan children. They were pretty relaxed about the outcome. It’s hard to sympathise with this way of thinking, but it made sense to them.

My internet search history from that time probably put me on some sort of Home Office watchlist. Maybe a small dossier was started on me. More recently, that dossier would have become thicker as I’ve moved sideways from sitcom into murder mysteries, having recently worked on Death in Paradise and Shakespeare and Hathaway. To work on shows like these, you need to be thinking of good reasons for good people to commit murder. Someone would need a very strong motive to commit a murder on an idyllic Caribbean island where the local detective has a 100 per cent resolution rate. You also need to research ingenious methods for murdering people in a way that escapes detection. I’m surprised I’ve not yet had a knock on my door, or enquiries made to the neighbours to call a number if they see anything suspicious.

But what about cartoon villains where nothing is real? The bold colours and the larger-than-life characters might suggest that there is more clarity about goodies and baddies. But there isn’t. Evil villains – that is, villains who realise they are evil – are extremely rare. Skeletor from He-Man and the Masters of the Universe comes to mind. This kind of demonic baddie can be entertaining with wit and charm, like Hades in the Disney movie, Hercules. This character had some brilliant one-liners and was superbly brought to life by the voice of James Woods. Overall, however, purely evil characters are hard to write.

Cartoon villains need proper motivation. This is either a character flaw or a backstory. In The Lion King, Scar is consumed with envy that his brother is king – and a good one at that. In The Incredibles, Syndrome is playing out his sense of injustice that he was not allowed to be Mr Incredible’s sidekick, Incrediboy. In The Simpsons, Mr Burns is essentially Mr Potter from It’s a Wonderful Life. He’s a Scrooge-type figure who doesn’t care about love and respect. He just wants to own the town.

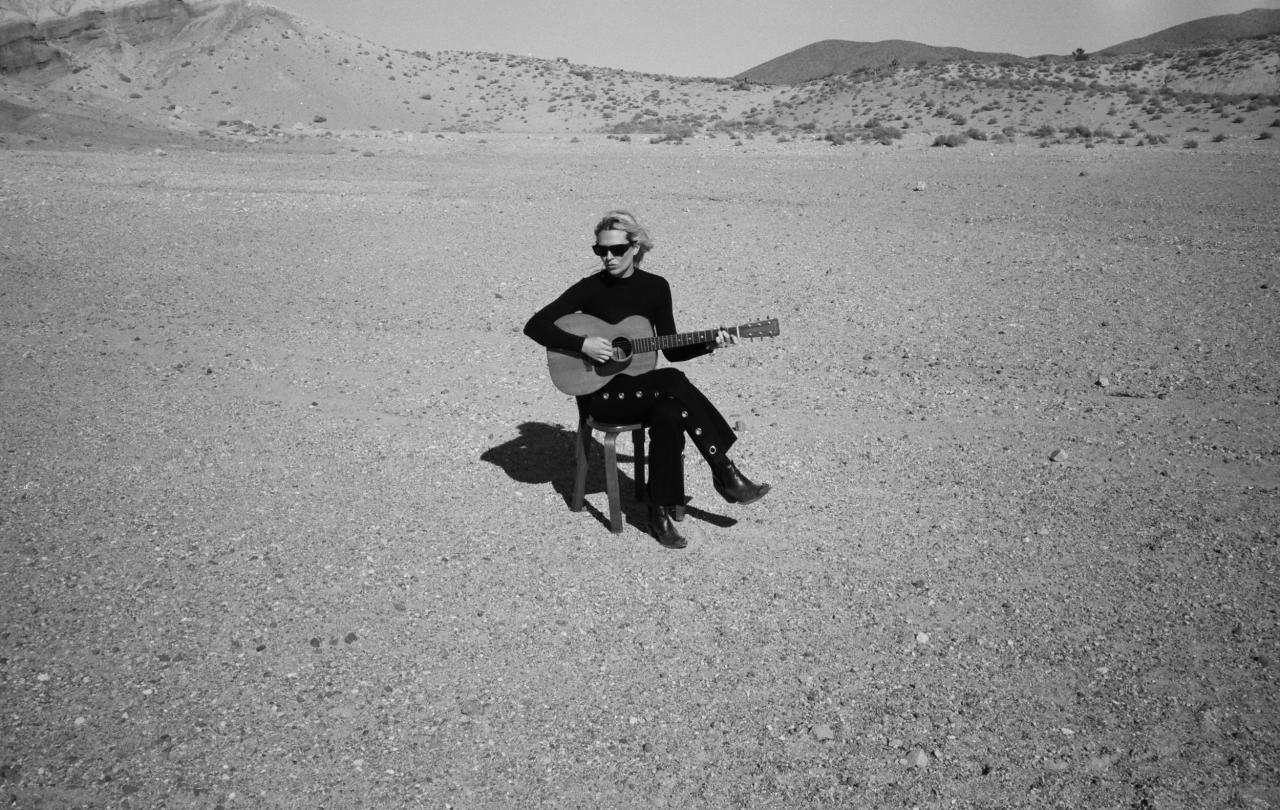

The cartoon villain I’ve been thinking about is for a new animation project I’ve been working on called Jazz Cow. The eponymous hero is a saxophone-playing cow and a reluctant Bogart-style leader of a bohemian band of misfits. They are trying to resist the advance of the all-consuming algorithm created by Dr Popp, the villain. But what’s his motivation?

Dr Popp is the very worst kind of villain: he has great power and he wants to help. In his own mind, he’s completely clear about his mission. He’s trying to make the world better, easier, safer, cheaper, more efficient and convenient. Why would anyone want to refuse his technology, reject his software and keep away from his algorithm?

This is why Dr Popp has to silence Jazz Cow, literally, by stealing his saxophone. He simply cannot allow Jazz Cow to delight audiences at Connie Snott’s with live improvised music. There’s no need for this music! Dr Popp has all the music you could possibly need, want or imagine. Why improvise when we have artificial intelligence?

Dr Popp is a cartoon villain for today when relativism is still alive and well. ‘Good’ and ‘Evil’ are still concepts or points of view rather than absolutes. However, there is good and evil in Jazz Cow. But the evil doesn’t come from Dr Popp. It comes from the user or consumer. That would be us.

‘The Algorithm’ is always learning and always trying to give us our hearts’ desire. And that’s the problem: our hearts frequently desire that which they cannot – and should not – have. Dr Popp’s algorithm is like a mirror held up to our faces. In it, we see the real baddie: ourselves. Not even Jazz Cow can save us from that. But what this horn-playing cow can do is to make the world a more humane place.

For more information about Jazz Cow, and information on how you can make the show happen, take a look at our Kickstarter – and don’t worry. Jazz Cow would approve, as it’s the creative’s way of sticking IT to the man.