In an interview with behind-the-scenes show Doctor Who Unleashed, returning showrunner Russell T Davies had this to say about how iconic Doctor Who baddie Davros was to be portrayed in a mini-episode produced for charity event Children in Need last year:

“We had long conversations about bringing Davros back, because he's a fantastic character, time and society and culture and taste has moved on. And there's a problem with the Davros of old in that he's a wheelchair user, who is evil. And I had problems with that. And a lot of us on the production team had problems with that, of associating disability with evil. And trust me, there's a very long tradition of this.”

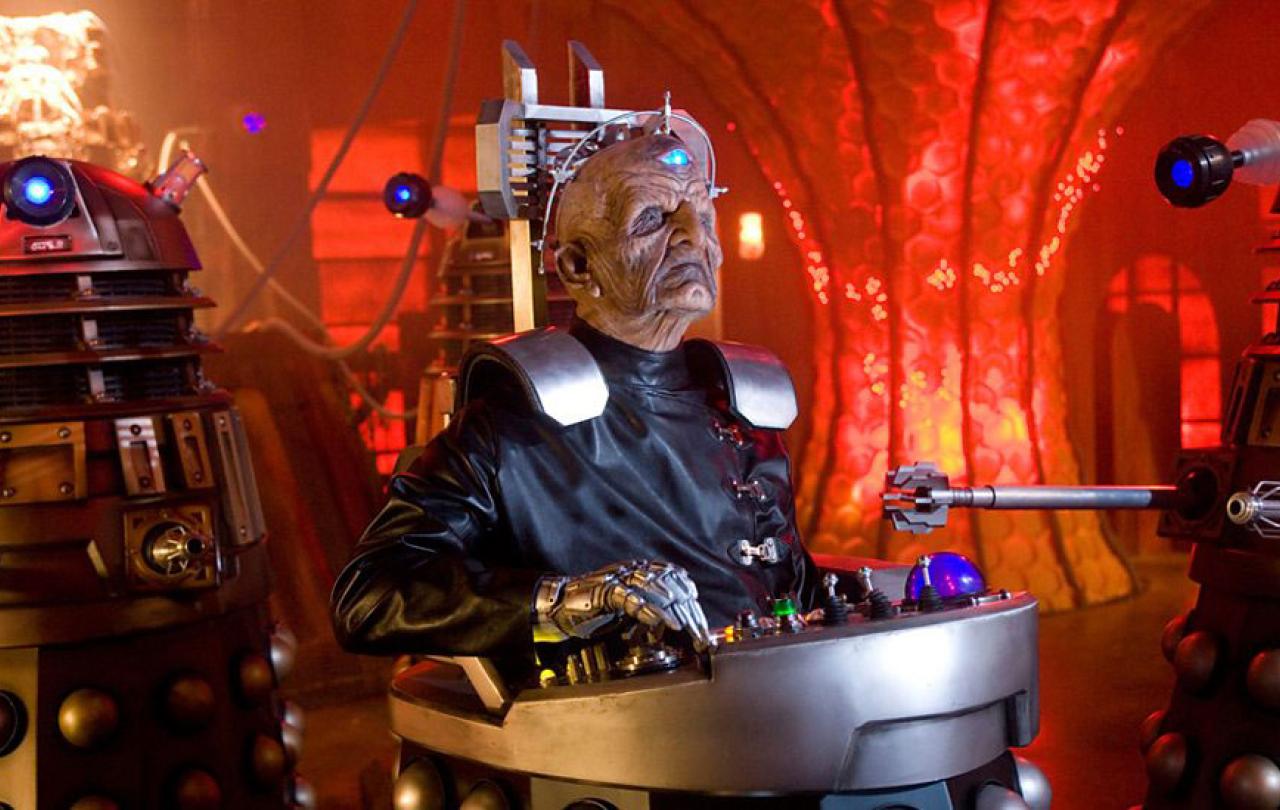

He goes on to explain that this led the production team to depict Davros differently. Gone are the facial scarring, the wheelchair, the robotic eye, and the mechanical hand. Now, as Davies explains, Davros is seen through a lens in which disability stops being a way of identifying evil.

“This is our lens, this is our eye. Things used to be black and white, they’re not black and white anymore, and Davros used to look like that and he looks like this now.”

Davies’ comments caused something of a split among fans online. On the one hand, Davies is continuing a tradition that can be traced back to his previous work on Doctor Who between 2005 and 2010. For example, he purposefully wrote Billie Piper’s character Rose Tyler as working class to cut against the grain of the prim-and-proper received pronunciation of previous companion characters. Perhaps Davies was tired of the limited scope of once again portraying the villain as disabled. Just as he didn’t want another female companion who lacked agency and depth, a depiction of Davros as disabled simply wouldn’t fit with this modern incarnation of the show. On the other hand, Davies’ comments seem to suggest that if this character ever appears again, he will not be disabled, even if that contradicts previous storylines, retroactively removing this part of the character as if it were never there to begin with.

But are Davies’ efforts necessary? Reddit user u/Bowtie327 suggests that Davros’ disability isn’t important: “I can’t say I ever even drew a connection around Davros, being evil, and being disabled”. Another user, u/PenguinHighGround, says that as a disabled person themselves they found him “weirdly inspiring, his (sic) goals are abhorrent, but he didn’t let his physical issues limit him”. X user @Dadros3 highlights how, as a wheelchair user, Davros has become a sort of science-fiction icon. He emphatically states that “evil comes in all forms, all races, all genders, all abilities, and all disabilities. We cannot stand by and allow the cancellation of something for fear of offence that doesn’t exist”.

We are starting to see where the conversation is heading. There are worries that simply removing disability from the equation does nothing to further the cause of disabled representation in media. Similarly, Davros isn’t evil because he’s disabled, so why is Davies so hellbent on changing something that wasn’t an issue to begin with? Whether because Davros’ disability went unnoticed by a majority able-bodied audience, or because his evil ideology has nothing to do with being disabled, the argument goes, Davros should stay put!

I believe these arguments miss a nuance, one that speaks to the philosophy that has allowed Doctor Who to last so long. I do not believe that Davies is suggesting that we pretend that harmful depictions of disabled people didn’t happen. Rather, this is a progression of a core part of Doctor Who.

Doctor Who embraces change. Whether it’s the titular character’s face changing every few years, new story motifs coming and going, or even entirely new production teams, change is what keeps the Doctor Who machine whirring. It is clear that in this new era of the show Davies is looking for a sort of fresh start. That is what keeps Doctor Who alive, and I think it’s what can make it such a great show: the ability, despite its long history, to still tell a new story. The times when I think the show has suffered are those when it has tried too hard to emulate what has come before.

This is a good opportunity to look back at how disability has been characterised in the media. It is good to sit with this tension even if we didn’t notice it and even if we don’t necessarily take offence. Interestingly, in the brief discussions Davies has had in the behind-the-scenes footage, he never mentions offence, nor does he try to blame anyone for depicting a wheelchair user in such a way. Instead, he looks forward, just as we do as an audience: forward to opportunities to capture the real lived experiences of disabled people, rather than treating disability narrowly as a way of identifying the baddie. Speaking to Doctor Who Magazine in 2022, casting director Andy Pryor stated that he is intentionally trying to cast more disabled actors, saying, “If you can’t cast diversely on Doctor Who, what show can you do it on?” This is even reflected in the set design, with the TARDIS now being completely wheelchair accessible. What becomes clear is that the changes made to the depiction of Davros are a product of the philosophy of change that is woven into the show’s DNA.

The original 1975 story ‘Genesis of the Daleks’, in which Davros first appears, is still available to watch on BBC iPlayer; no attempt has been made to alter the original to remove the problematic depiction of disability. These stories are still there for us to watch and learn from, not to pave over and pretend they didn’t happen. Perhaps this means Davies and the rest of the production team at Bad Wolf will be cautious about featuring Davros again. What we can say is that Doctor Who is a unique icon in the television space in the way it demonstrates how we respond to change.