In much of the work I’ve been involved in, whether writing, coaching, hillwalking, local politics, or international development, I’ve learned to ask questions I don’t have answers to, and sometimes neither do the people I’m with. We sit with the question, decide whether it’s the right one, try to discern what else emerges in our peripheral vision as we focus on it. It takes effort to come to something like an answer, and in doing so, we peel off layers of unknowing. It has taken practice, and it can be slow work. But in searching for good questions, I see they can be an entry point into not just information but wisdom too. And there are many places that are hungry for wisdom.

I longed for better questions and more curiosity when I was a district councillor. Curiosity that made space for residents to share their stories and opinions, curiosity about different political positions and what might happen if we work across divides, about what might be possible if we could get past the way things had always been and imagine what they could become. But the desire to save face, to be seen to be in control, was strong, and I felt it often got in the way of real conversation. To be committed to the process more than the product takes courage, I think. The courage of uncertainty, of saying, “I don’t know”, of putting humility and honesty before status. Sitting with questions can be difficult, and can even feel like a luxury, but questions show us ourselves and the world a bit more clearly, offering a pathway to relationship, to collaboration, to humanity, to wisdom.

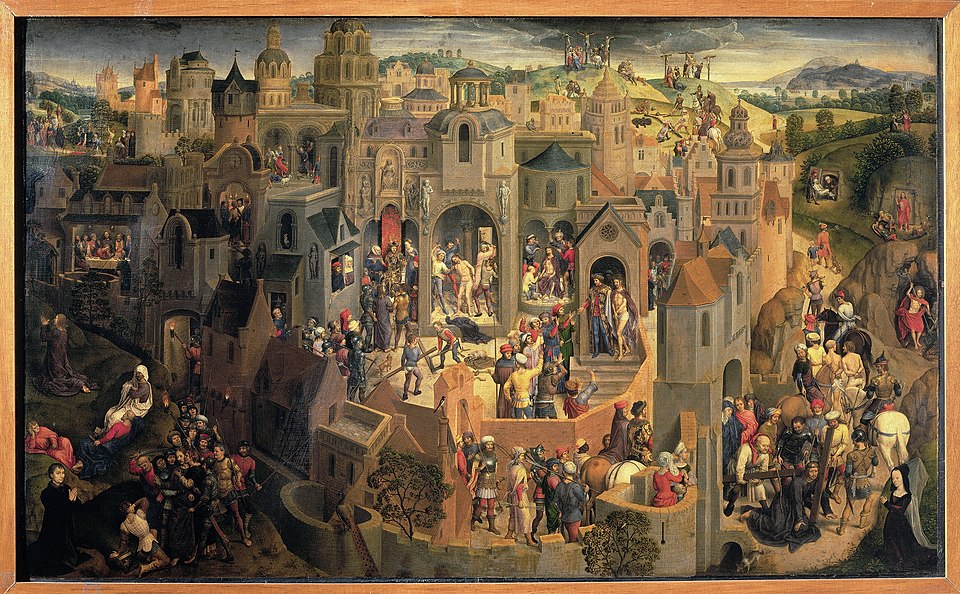

I have been thinking about the temptation to trade the wisdom of questions for the apparent certainty of instant answers, even wrong answers. It is a temptation that, in our age of one-click everything and the importance of image, is only quickening. I have been wondering if it started with that old temptation in the Garden of Eden. Staring at a painting of Adam and Eve in the Prado gallery a while back, I wondered whether that original temptation set us on a path of instant information but also of depleted wisdom.

As I peered into the painting, a thought sparked: what if God told Adam and Eve they could eat fruit from any tree except the tree of the knowledge of good and evil not because he wanted us to stay ignorant or innocent (something that Philip Pullman explores in the His Dark Materials trilogy) but because he knew it was too easy for us to eat from that tree? He wanted us to live, and to search for knowing and wisdom ourselves. Eating the fruit would bypass experience; there’d be no need to develop muscles of thinking and discernment. And he wanted us to be wise, to keep creating and tending the world with him. When those first pulled-from-the-earth humans ate the fruit, it was like us still-dependent-on-the-earth humans asking Artificial Intelligence to write us an essay: we might get what we want, but we’ve bypassed the experience of thinking, creating, discerning what’s ours to say.

This analogy creaks when pulled too far, but it lingers all the same. There’s a quote I’ve long appreciated, from the biologist E. O. Wilson:

“We are drowning in information, while starving for wisdom. The world henceforth will be run by synthesisers, people able to put together the right information at the right time, think critically about it, and make important choices wisely.”

Picking the fruit, becoming reliant on AI, gives us information but perhaps not the ability to think, and not the wisdom to make good choices. God wants us to be wise. The Bible’s Book of James says we can ask for wisdom. It is not withheld from us; it is not hidden. It’s everywhere, waiting to be called on.

It’s so easy to find answers now: Google solves problems and democratises access to information, unless of course you’re in a part of the world that has no digital access. In rural mid Devon and in rural Zambia, both places I’ve worked deeply with communities, you can’t simply access an online meeting or find the answer to a question you might have. Sometimes this feels a life-giving challenge: it increases the need for relationship, for trust, for community conversation. Other times it hinders progress: it means people can’t access jobs, or basic health knowledge, or government decisions that affect them. Google has changed who can access the world, how we interact with it, how we think and learn. Historically, people memorised poetry and scripture and news. The printing press changed that; words were pulled from minds and printed on paper. Our online existence has accelerated that: I don’t need to stretch my memory if I don’t want to; I can find and store what I need digitally. We’ve outsourced our memory, and I wonder whether we’re also outsourcing our capacity to think and discern.

In doing so, we risk disconnecting from ourselves, our relationships, our communities, our places. No longer do we need to rely on each other for knowing and wisdom — we can trust faceless digital forces that profit from us doing so. We risk too our unique ability to think creatively, to discern good sources, to think deeply and with nuance about a topic. If AI learns from everything that has been, it can synthesise and perhaps even extrapolate from that and project forward, but it can’t creatively imagine. It can’t reflect and speak wisdom.

There is an ease and convenience to Google, to AI. There was an ease and convenience to picking the fruit to gain knowledge. But we are not called to ease and convenience. I think we are called to love, to care for our neighbours, and these things are necessarily inconvenient. Digital access to information is a tool, a resource, a gift that benefits many of us in many ways. But it could easily blunt our humanity, becoming a temptation that bypasses the work of truly living. There are no digital shortcuts to the difficult work of community, no AI-shortcut to loving well, just as there was never a shortcut to complete knowledge of good and evil. With information available at the tug of a fruit — a click, a download, a request to an artificial intelligence — I am curious how our ability to sit with questions will change, whether we’ll feel beauty or fear in not having all the answers, whether we’ll lose our ability to discern, and to “have faith in what we do not see.”

Jesus calls us to questions, to relationship, to love, not to answers that might be easily won but little interrogated. He knew that questions, not answers, were often the best response to questions. Questions to sit with, to hold up as a mirror, to walk as a path to wisdom. He asked a lot of them. Who do you say I am? How many loaves do you have? Do you love me? What do you want? Why are you afraid? The Bible records Jesus asking questions, and sometimes offering answers too. But the point often seems to be the question itself, giving endless chances for people to question their assumptions, and their judgements, and to deepen their faith and make it personal. In doing so, Jesus offered a path to deeper and more meaningful knowledge of God, the world, others, and ourselves. And by asking questions he gave dignity to people, listening deeply to them, loving them, calling them into themselves.

Sitting with questions, with curiosity, is I think an entry point to faith and to mystery. And we have companions as we do this: Jesus, early Christian mystics, prayer, the Psalms, each other – these are all places I turn to dig deeper into the knowing that comes through unknowing. To live with questions and within mystery, to listen deeply to each other, to speak the language of soul rather than certainty, might be difficult and countercultural. But in an age where the future is becoming less certain despite the whole world seemingly at our fingertips, I think it is where our hope is. After all, “what good is it for a man to gain the whole world but forfeit his soul?”