“Sell-out!” When I arrived in Seattle around the turn of the century, I was too tardy and too scrupulously Christian to make much of what was left of the fading grunge scene. Still, I had arrived in time to fumble my way through various university dorm room arguments with more musically astute peers about when this or that band had sold out, when they abandoned the authentic homemade purity of their sound for the greater financial rewards of mainstream pop. These bands still traded on their image as rebels and outsiders, but we all knew it was a pose. Even so, we sympathised. Who wouldn’t be tempted to sell out if the alternative was poverty and virtuous obscurity?

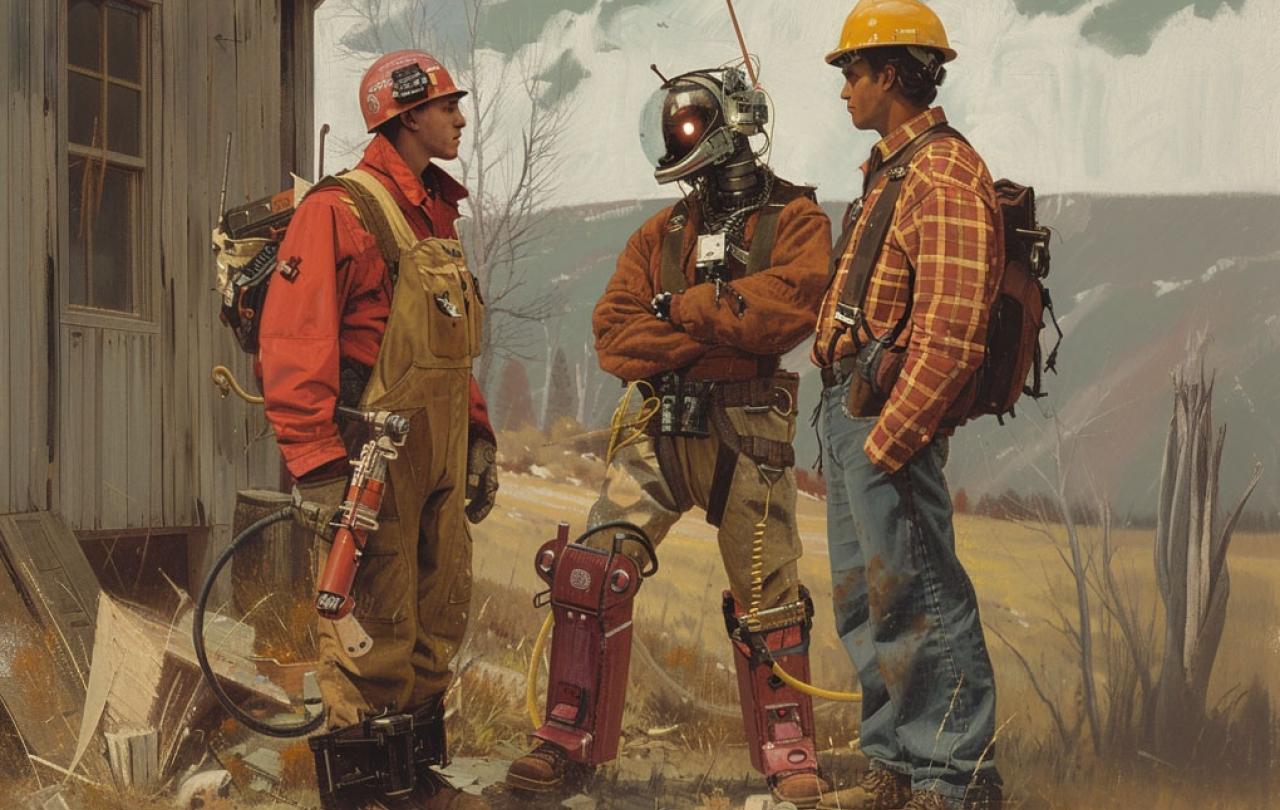

I have been thinking about those conversations recently, not just because Nirvana T-shirts suddenly seem to be everywhere again, but because the other form of ambient idealism circulating in Seattle at that time, techno-utopianism, seems to have reached the end of its own version of selling out. In my decade in Seattle, I learned to scoff at those who didn’t embrace new technologies, dutifully parroting the slogan, “Information wants to be free!” The hackers of my generation had founded companies which were going to remake the corporate world. Many of my friends from university went to work for them. They were excited about building new tools at lower costs, and about doing it all with a social conscience. The bosses of these companies were rock stars in T-shirts and jeans, changing the world.

Two decades later, few of us are happy with the world they’ve built. The professions threatened by their innovations started with music and journalism and have now moved on to just about anything that an AI can imitate. Many of those bosses are still in T-shirts and jeans, still pretending to be outsiders, even as their wealth has piled up into unimaginable sums. Their continual need for more has led many of them to decide that a social conscience is too expensive a liability to retain. They prioritise profits and share prices above employee well-being and social cohesion. Some demur from taking stands against authoritarian politicians, pretending that such neutrality is a matter of principle and not economic self-interest. Others openly egg on our broken politics, eager to snatch still more spoils from its demise.

What has gone wrong? As an ethicist, my temptation is always to say that if only these bosses were better advised, reminded of the responsibilities of their power, things could change. What is a skilled ethicist if not someone whose rhetoric and erudition can move the hearts of the mighty?

At the advent of European colonialism, there was perhaps no more skilled or erudite moral theologian than Francisco de Vitoria. After taking the premier professorship at the best university in Spain, which was becoming the richest state in Europe, he pioneered legal and ethical theories which reverberate in international and human rights law today. There were few more incisive critics of the self-deceptive rationalisations of his contemporaries and few better placed to have the ear of one of the most powerful rulers of the age, the king of Spain and Holy Roman Emperor, Charles V.

Today Vitoria is often pointed to as a prophet, someone who drew on his theological expertise and rhetorical acumen to tear apart Spain’s justifications for its growing overseas empire. This reputation largely rests on his On the American Indians, a speech he gave in response to the horrific reports of “bloody massacres and of innocent individuals pillaged of their possessions and dominions” which were filtering back to Spain. In it, Vitoria does indeed dismantle dozens of quasi-legal entitlements to which the Spanish appealed to justify these actions. By the time he reaches the end of the speech he even seems to be contemplating Spain abandoning the Americas. He says, “The conclusion of this whole dispute appears to be this: that if all these titles were inapplicable…the whole Indian expedition and trade would cease”.

However, when he turns to the financial implications of this, he allows that it “would mean a huge loss to the royal exchequer, which would be intolerable.” Here Vitoria concedes that there is one immovable object that all his ethical demolition work could not shift. His king had palaces to build, heretic German princes to bring to heel, and an ancestral homeland to recapture from the French. Money was needed for Charles to play his role as a king among kings, and no ethical quibbles about evil deeds carried out far away could be allowed to impede its flow. After this admission Vitoria sputters to a conclusion with a few unworkable and naive suggestions about how to at least make colonialism marginally less terrible.

If there is a historical parable calculated to drive an ethicist to despair, this is it. It shows ethical reflection for what it all too often is: an ineffectual expression of moral anxieties we air and then largely ignore. Our institutions, whether nation-states or companies, make a show of acting ethically, but few of us are fooled. It is a pose. The sorts of ‘ethics’ practised by countries and corporations are strictly those which aren’t a serious threat to the appetites of their leaders for more wealth, power, and security. Like Charles V, they too have peers among whom it is intolerable to contemplate losing status.

These priorities are reflected even among those of us with less stratospheric power or wealth. Many of us worry about the origins of our food, our clothes, and our cheap electronics, having heard stories of labourers spending long hours in fields or cramped sweatshops. We may even buy Fairtrade as a response, but only if the price isn’t too high and if this ‘ethical consumption’ doesn’t mean giving up our middle-class lifestyle.

In his work, Whose Justice? Which Rationality?, Alasdair MacIntyre argues that the sorts of goods we pursue can be lumped into two broad categories: goods of effectiveness and goods of excellence. The former are things like wealth, power, and fame, which can be conferred and sometimes even transferred, and which bear little relation to the characters of the people involved. The latter are the sorts of skills and performances, the virtues and virtuosities, which people attain through long and disciplined development.

For MacIntyre, both kinds of goods are necessary, but it matters a great deal which one gets priority. In a society which prioritises goods of effectiveness – such as Vitoria’s, but also, for MacIntyre, most modern societies as well – procedural justice reigns supreme. As long as we didn’t break any rules in getting our money and status or, for that matter, our exciting new clothes or smart speakers, we are in the clear. The problem, as Vitoria’s case demonstrates, is that in such societies even this minimal kind of justice cannot be allowed to block the flow of wealth. So procedural justice winds up being a tamed tiger in the service of the powerful. It is let out of its cage only when convenient, typically to demonise the failings of others. This is not just true of billionaires and politicians. Those of us who are western, middle-class consumers play this game too. The ‘ethics’ of our consumption are kept on a convenient lead. They are allowed to nibble around the edges of our consciences, but never to tear into the heart of the way we inhabit the world.

What would it mean to prioritise goods of excellence? This is one of those questions MacIntyre poses but does not answer, because he is convinced that in each society it would look different. Each community would need to begin by wrestling with what kinds of people its members should be, what excellences they can and should pursue within their communities, and what virtues should be emphasised. Only then should they move on to think about what sorts of wealth or power are necessary to achieve these. Still, it can be frustrating that MacIntyre does not lay out his preferred programme. He offers no ready-made blueprint for a just society.

Of course, neither did Jesus when he counselled his disciples to seek first the kingdom of God, telling them that if they did so the necessities of life (food, drink, and clothes) would be provided. What Jesus meant by the kingdom of God is elusive, now and not yet, hidden and revealed in parable and aphorism. What it was not, however, is clear. It was not a kingdom founded on acquiring earthly power and wealth. In fact, much of the teaching of the gospels can be boiled down to Jesus’ warnings about the dangers of prioritising the goods of effectiveness (“Where your treasure is there your heart will be also”, “One thing you lack. Go sell everything you have and give to the poor”, “The rulers of the Gentiles lord it over them. Not so with you”, “Man does not live on bread alone”, “What does it profit a man to gain the whole world and lose his soul?”) and to his urging of his disciples to embrace the goods of excellence that constitute the kingdom of God.

Understood this way, the reason it is easier for a camel to go through the eye of a needle than for a rich man to enter the kingdom is that the hunger for riches, wealth, and fame pulls those enamoured with goods of effectiveness away from true fulfilment. There are always more houses to own, new neighbours to impress, and new areas to conquer. Acquisitiveness, MacIntyre reminds his readers, is the characteristic vice of modernity. That many of us, from billionaires down to underpaid academics, habitually think that what is missing from our lives is a little more money or fame is evidence that he’s right.

For Jesus, virtuous obscurity and poverty were preferable to fame. We remember this at every nativity play when we acknowledge that the best God could manage for witnesses to the divine arrival was a hardscrabble group of animal herders and a few foreign astrologers. It is not that Jesus refused to use his abilities or hid away from public notice. However, the public he chose to act among was nestled in a corner of a corner of the empire, far from the rewards offered by the cultured salons of Roman power and privilege. In two of the gospels, Jesus is tempted to sell out. He is offered unimaginable fame and power at the outset of his ministry. He forcefully rejects it. For Jesus, an itinerant life spent ministering to fishermen and farmers was enough.

What would it look like for us to embrace Jesus’ priorities? A place to start would be actually listening to Jesus about practices such as fasting, prayer, and almsgiving. Each of these is an act of resistance against the continual appetite for more and a testimony to an economy of grace that exists beyond all human economies. We could also try preaching in a way that takes the admonitions of Jesus seriously. I have heard numerous sermons about the rich young ruler which include an extended caveat on how maybe it was important for him to sell his possessions, but that doesn’t mean we have to. Maybe not, but shouldn’t those of us who call ourselves Christians at least be open to God having that radical a call on our lives? If our ethics and our faith are not allowed to ask these questions of us, if we have sold out in such a way that the real possibility of them radically disrupting our lives is intolerable to our imagination, what good are they?

Join with us - Behind the Seen

Seen & Unseen is free for everyone and is made possible through the generosity of our amazing community of supporters.

If you’re enjoying Seen & Unseen, would you consider making a gift towards our work?

Alongside other benefits (book discounts etc.), you’ll receive an extra fortnightly email from me sharing what I’m reading and my reflections on the ideas that are shaping our times.

Graham Tomlin

Editor-in-Chief